By rerixo Go To PostI don't really know anything about that, I was mainly thinking of ML use cases. When I say peak I just mean their influence will decline from here, not collapse. But the threat Nvidia faces from big companies like Google developing their own TPUs is real. There are also loads of startups popping up which deal with more specialised units tailored to specific things within ML. These companies will likely be acquired, making the landscape even more difficult for Nvidia.

Sure, but it's not like Nvidia is just going to sit by and do nothing in that space. GPUs are used for deep learning and, as I mentioned, Nvidia obviously has an edge there. For example, Nvidia Voltas are used in the fastest supercomputer in the world. You can't just pull Nvidia out and plug somebody else in. If that were to happen, like I said earlier, it would take a really long time.

Last week, the US Department of Energy and IBM unveiled Summit, America’s latest supercomputer, which is expected to bring the title of the world’s most powerful computer back to America from China, which currently holds the mantle with its Sunway TaihuLight supercomputer.

With a peak performance of 200 petaflops, or 200,000 trillion calculations per second, Summit more than doubles the top speeds of TaihuLight, which can reach 93 petaflops. Summit is also capable of over 3 billion billion mixed precision calculations per second, or 3.3 exaops, and more than 10 petabytes of memory, which has allowed researchers to run the world’s first exascale scientific calculation.

The $200 million supercomputer is an IBM AC922 system utilizing 4,608 compute servers containing two 22-core IBM Power9 processors and six Nvidia Tesla V100 graphics processing unit accelerators each. Summit is also (relatively) energy-efficient, drawing just 13 megawatts of power, compared to the 15 megawatts TaihuLight pulls in.

You're understating how deep they are in the HPC world (which ML is a subset of).
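For a sense of scale, here is a quick back-of-the-envelope sketch using only the figures from the article quoted above. The variable names are my own, and the per-megawatt comparison simply divides the numbers as the article gives them, which may not be a like-for-like benchmark.

```python
# Numbers are copied from the quoted article; variable names are illustrative.
servers = 4608
cpus_per_server = 2      # 22-core IBM Power9
cores_per_cpu = 22
gpus_per_server = 6      # Nvidia Tesla V100

total_cpus = servers * cpus_per_server
total_cpu_cores = total_cpus * cores_per_cpu
total_gpus = servers * gpus_per_server

# Performance (petaflops) and power draw (megawatts) as stated in the article.
summit_pflops, summit_mw = 200, 13
taihulight_pflops, taihulight_mw = 93, 15

speed_ratio = summit_pflops / taihulight_pflops
summit_pflops_per_mw = summit_pflops / summit_mw
taihulight_pflops_per_mw = taihulight_pflops / taihulight_mw

print(f"CPUs: {total_cpus}, CPU cores: {total_cpu_cores}, GPUs: {total_gpus}")
print(f"Summit vs TaihuLight speed: {speed_ratio:.2f}x")
print(f"PFLOPS per MW: {summit_pflops_per_mw:.1f} vs {taihulight_pflops_per_mw:.1f}")
```

That works out to 27,648 V100s in a single machine, which is the point about how entrenched Nvidia is in HPC.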

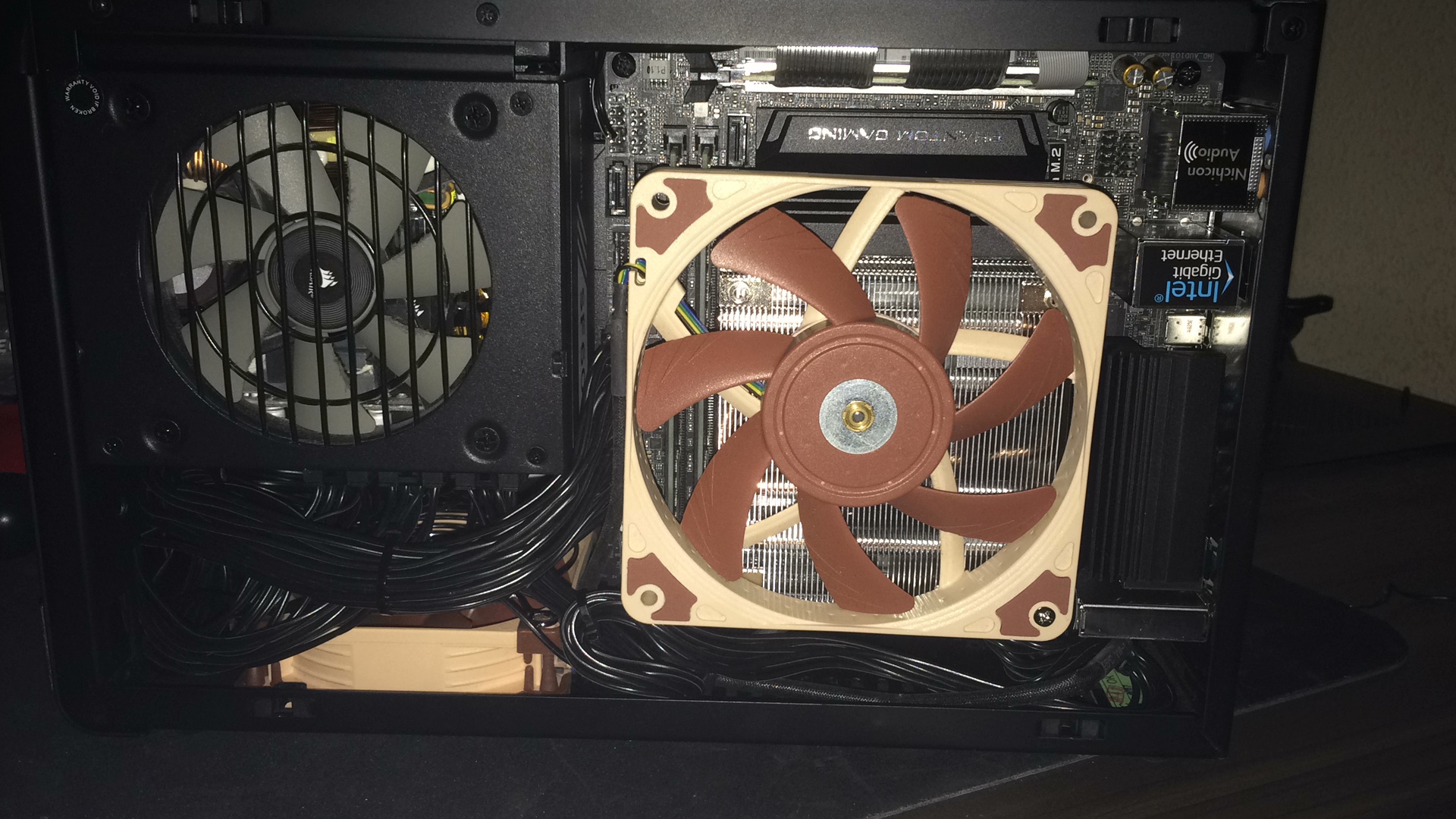

Silverstone AR11 with a NF-A12x15. I think I’ll be sticking with this going forwards, cooling isn’t as good as the AIO but the noise levels are incredible. I legitimately can’t hear it running at idle and it barely makes a sound under load.

Next up, custom Noctua coloured cables and a second 1TB SSD.

By Facism Go To Postis the fan on straight or is it the perspective triggering me here?

Slightly off centre, only one screw holding it in place until I make a bracket for it.

By Rob Go To PostI forgot who said it but they weren't joking. DX12 and BF do not get along at all

Now imagine when they add RTX features, which requires you run the game in DX12. Just imagine.

By Rob Go To PostI forgot who said it but they weren't joking. DX12 and BF do not get along at all

Frostbite and DX12. And yeah...the ray tracing is going to require DX12 as well. Idk why they have such an issue with it.

By Smokey Go To PostFrostbite and DX12. And yeah…the ray tracing is going to require DX12 as well. Idk why they have such an issue with it.

Assuming diehard is right (he almost always is in these matters), it's probably related to them using a wrapper instead of adding native support.

By Zabojnik Go To Post

It's taking them forever. Looks pretty slick tho.

Ooooh I like this.

By Zabojnik Go To Post

It's taking them forever. Looks pretty slick tho.

Is this going to be a replacement for LGS?

By DominicanPower Go To PostIs this going to be a replacement for LGS?

Indeed.

By DominicanPower Go To Posthttps://www.amazon.com/dp/B01HU44Q7G/ref=psdc_11036491_t1_B00BOVOED8

Is this a decent bungee?

No idea. The only one I've personally used was the Zowie one: https://zowie.benq.com/en/product/accessory/cable-management/camade.html

you aint shit unless you get this bungee https://www.amazon.com/ENHANCE-Gaming-Mouse-Bungee-Holder/dp/B074XKRKL1

By DominicanPower Go To Posthttps://www.amazon.com/dp/B01HU44Q7G/ref=psdc_11036491_t1_B00BOVOED8

Is this a decent bungee?

It’s probably not terrible, but the Zowie Camade is the best to get. Nice heavy base to it, doesn’t move easily. Only downside is the price, it’s expensive for what is essentially a paperweight.

By NinjaFridge Go To PostUse wired mouse brehs

They're much lighter. *shrug*

By Kibner Go To PostThey're much lighter. *shrug*

The newest Logitech is actually really fucking light, I kind of want to check it out. But I am still scared of the stability of Logitech mouse wheels.

By HottestVapes Go To PostIt’s probably not terrible, but the Zowie Camade is the best to get. Nice heavy base to it, doesn’t move easily. Only downside is the price, it’s expensive for what is essentially a paperweight.

I wish I had just gotten that Zowie one tbh. I got one mostly based on appearance, as it looks very inoffensive (just black without anything extremely space looking to it). But man, it just isn't very good compared to the zowie one which a friend has.

dammit, i just realized i linked the wrong damn thing because i was tired and rushed and not thinking straight. This is the Zowie bungee I used (that Vapes gave me the name of): https://zowie.benq.com/en/product/accessory/cable-management/camade.html

By Kibner Go To PostThey're much lighter. *shrug*

Depends, Logitech have two wireless mice under 90g now, think the new G Pro is 80g. Lighter than many of the popular wired mice and I think only FinalMouse Ultralight is significantly lighter (but it’s a pain to buy)

I want to try an Ultralight so bad. I still like my Vektor, though. More comfortable to use than any of the Zowie's (got to try them all at a QuakeCon), which are my second favorites atm.

By Kibner Go To PostI want to try an Ultralight so bad. I still like my Vektor, though. More comfortable to use than any of the Zowie's (got to try them all at a QuakeCon), which are my second favorites atm.

Me too. I believe they’re working on a new mouse, some collaboration they’ve done with Ninja I think. I’m cautious but hopeful that it’ll be something good and not look like Gamer trash.

Either way, it’ll take something very special for me to ditch the G305. Literally the perfect shape for my hand, and the battery life is incredible too.

By Laboured Go To Post

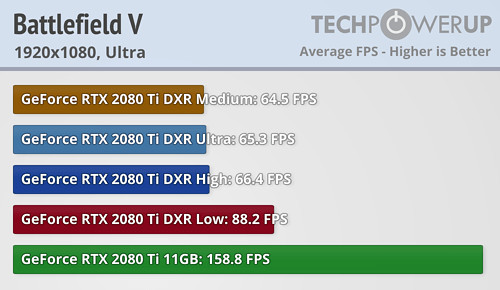

Still above 60. Looking good for a super high end feature like Ray tracing. Looking forward to trying it when I get back.

So 4k, ultra settings, dxr on low, gets you ~45fps (2080ti). I'll take it. Let gsync do its thing 👍

just like everyone speculated, the 2070 and 2080ti are too slow with RTX for anyone to actually use it. When was the last time a new feature was anything but hype for a first gen card?

By Smokey Go To PostSo 4k, ultra settings, dxr on low, gets you ~45fps (2080ti). I'll take it. Let gsync do its thing 👍

45fps.. Smokey what are you doing

By diehard Go To Postjust like everyone speculated, the 2070 and 2080ti are too slow with RTX for anyone to actually use it. When was the last time a new feature was anything but hype for a first gen card?

Didn't new DX revisions offer better performance for equivalent graphics? Or am I misremembering once again?

By Smokey Go To PostSo 4k, ultra settings, dxr on low, gets you ~45fps (2080ti). I'll take it. Let gsync do its thing 👍

Also, this is disgusting and I don't know who you are anymore.

By Kibner Go To PostDidn't new DX revisions offer better performance for equivalent graphics? Or am I misremembering once again?

I think some of the earlier games with switchable modes like GTA 4 and Max Payne actually ran slightly worse with dx11 vs dx10/10.1? Games built from the ground-up with DX11 should run better, but they came so far along after the launch of Cypress and Fermi that it kind of doesn't matter?

DirectX is also just a feature set, it never really required extra die space and i don't know if people were ever really expected to pay more for a video card just because it supported a newer version.

Right now, RTX has more in common with PhysX cards than it does with newer DirectX support. That might be a little too harsh though.

I know it is an API, but it was still a new feature that was touted as much as this ray tracing feature. Remember when tessellation was a big thing NVidia advertised? https://www.nvidia.com/object/tessellation.html

By diehard Go To Postjust like everyone speculated, the 2070 and 2080ti are too slow with RTX for anyone to actually use it. When was the last time a new feature was anything but hype for a first gen card?

45fps.. Smokey what are you doing

45fps with essentially the most extreme settings possible? And at 4k? That's not bad. Gsync was basically created for situations like this. We'll see how it works in practice. If I didn't have a gsync monitor I'd be less interested in it.

Smoothing out that 40-60FPS range is really the only thing Gsync is good for, and to be fair it is really good at that. Still feel it’s pointless for higher frame rates tho

Gsync will help tearing, it won't help the stutters from running it in DX12.

a high framerate will provide far more visual appeal, not to mention competitive advantage than some better reflections and shadows. You can say a lower framerate is what gsync is for, but at the opposite end you can say that isn't what a high refresh rate monitor is for.

By Smokey Go To PostSo 4k, ultra settings, dxr on low, gets you ~45fps (2080ti). I'll take it. Let gsync do its thing 👍

bring shadows to low, turn off AA and AO and that might get up to 55-60

edit: this shyt aint worth the frames, needs to drop frames by 20% max to be worth it, and that's at ultra settings
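To put numbers on the "20% max" rule of thumb above, here is a rough sketch. The ~45fps figure is from the posts in this thread, but the 60fps baseline with DXR off is purely an assumption for illustration, and the function names are made up.

```python
def frame_time_ms(fps: float) -> float:
    """Time spent on each frame, in milliseconds."""
    return 1000.0 / fps


def drop_percent(baseline_fps: float, dxr_fps: float) -> float:
    """Percentage of frames lost when going from baseline to DXR on."""
    return (baseline_fps - dxr_fps) / baseline_fps * 100.0


def max_baseline_within_budget(dxr_fps: float, max_drop_percent: float) -> float:
    """Highest DXR-off frame rate for which dxr_fps still fits the drop budget."""
    return dxr_fps / (1.0 - max_drop_percent / 100.0)


dxr_fps = 45.0            # figure quoted in the thread (4k, ultra, DXR low, 2080ti)
assumed_baseline = 60.0   # ASSUMPTION: DXR-off frame rate, not a measured number

print(f"Frame time at {dxr_fps:.0f}fps: {frame_time_ms(dxr_fps):.1f} ms")
print(f"Drop from assumed baseline: {drop_percent(assumed_baseline, dxr_fps):.0f}%")
print(f"Baseline that keeps 45fps within a 20% drop: "
      f"{max_baseline_within_budget(dxr_fps, 20.0):.2f}fps")
```

In other words, 45fps with DXR only fits a 20% budget if the game runs at roughly 56fps or less without it. Anything faster than that with DXR off means the hit is bigger than 20%.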

What do y'all think about the RX 590 deal that's supposed to happen tomorrow?

I'm contemplating upgrading my 970 if the RX 590 really does come with RE2, DMCV, and The Division 2 lol

can buy a freesync monitor too instead of spending an arm and a leg on a gsync monitor.

the RX 590 is Polaris 30 which is Polaris 20 with a slightly improved lithography which is Polaris 10 with higher clocks. Hard to get excited for a card using essentially a GPU from mid 2016. Might be a decent value though.

Vega being designed around the availability and dropping prices of HBM2 was a mistake in hindsight.

Ask yourself ... what would Smokey do?

I don't know, might be worth it. How much faster is the 590 supposed to be? If it's anywhere between 20-30% and you can get it for 100-150€ (net spend after selling your 970), then I'd say yes. Kind of doubt that'll be the case though.

Freesync monitors are cheaper, however by buying one you lock yourself into the AMD ecosystem, which has its drawbacks.

By diehard Go To Postthe RX 590 is Polaris 30 which is Polaris 20 with a slightly improved lithography which is Polaris 10 with higher clocks. Hard to get excited for a card using essentially a GPU from mid 2016. Might be a decent value though.

Vega being designed around the availability and dropping prices of HBM2 was a mistake in hindsight.

Honestly, is anybody even buying Vega? And yeah like you said it's basically a 2015 card in a 2018 shell.

idk

AMD pisses me off with their non-competitiveness. They came out and said they won't be attempting ray tracing until the entire line can do it. Noble I guess? But it also sounds like an excuse.

I game in 1080p lol. I just want it for the 3 games I'd get. The card seems like a minor upgrade, people are saying 10-20%, which is enough for me. I'm not maxing out stuff. I'm fine with playing games in medium/high instead of ultra.

By Smokey Go To PostHonestly, is anybody even buying Vega? And yeah like you said it's basically a 2015 card in a 2018 shell.

idk

AMD pisses me off with their non-competitiveness. They came out and said they won't be attempting ray tracing until the entire line can do it. Noble I guess? But it also sounds like an excuse.

no they said that ray tracing won't take off until their whole line can do it. they'll support it in their high end models.